Closing the Sim-to-Real Gap for Ultra-Low-Cost, Resource-Constrained, Quadruped Robot Platforms

Abstract

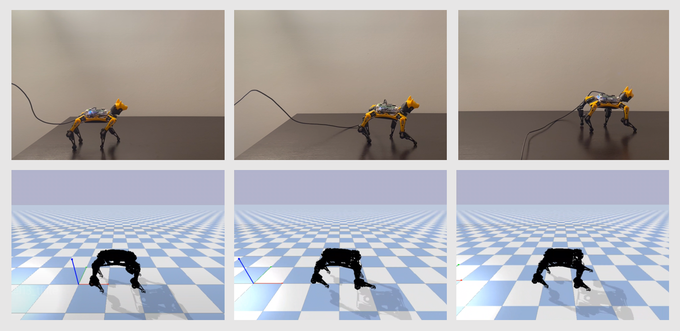

Automating the design of robust walking gaits for legged robots has been a long-standing challenge. Previous work has achieved robust locomotion on sophisticated quadruped hardware platforms using reinforcement learning and imitation learning. However, these approaches do not consider the strict constraints of ultra-low-cost robot platforms with limited computing resources, few sensors, and restricted actuation. On such constrained platforms, transferring skills learned in simulation to reality requires special attention. As a step toward robust learning pipelines for these platforms, we demonstrate how existing state-of-the-art imitation learning pipelines can be modified and augmented to support low-cost, limited hardware. By reducing our model's observation space, leveraging TinyML to quantize our model, and adjusting the model outputs through post-processing, we learn and deploy successful walking gaits on an 8-DoF, $299 (USD) toy quadruped robot with reduced actuation, limited sensor feedback, and limited computing resources. A video of our current results is available at: https://youtu.be/jloya0TOzWA.
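To make "quantize our model" concrete, the following is a minimal sketch of per-tensor affine (scale plus zero point) int8 quantization, the general scheme that TinyML toolchains such as TensorFlow Lite for Microcontrollers build on. It is an illustration under that assumption, not the authors' deployment pipeline; the function names `affine_params`, `quantize`, and `dequantize` are hypothetical.

```python
from typing import List, Tuple


def affine_params(values: List[float], qmin: int = -128, qmax: int = 127) -> Tuple[float, int]:
    """Hypothetical helper: map the real range of `values` onto the int8 range [qmin, qmax]."""
    # Widen the range to include 0.0 so that real zero is exactly representable.
    lo = min(min(values), 0.0)
    hi = max(max(values), 0.0)
    if hi == lo:
        return 1.0, 0  # all-zero tensor: any scale works
    scale = (hi - lo) / (qmax - qmin)
    zero_point = int(round(qmin - lo / scale))
    zero_point = max(qmin, min(qmax, zero_point))
    return scale, zero_point


def quantize(values: List[float], scale: float, zero_point: int,
             qmin: int = -128, qmax: int = 127) -> List[int]:
    """q = clamp(round(v / scale) + zero_point, qmin, qmax)."""
    return [max(qmin, min(qmax, int(round(v / scale)) + zero_point)) for v in values]


def dequantize(q: List[int], scale: float, zero_point: int) -> List[float]:
    """Approximate reconstruction: v ~= scale * (q - zero_point)."""
    return [scale * (x - zero_point) for x in q]


if __name__ == "__main__":
    weights = [-0.5, 0.0, 0.25, 1.0]  # illustrative values, not real model weights
    s, z = affine_params(weights)
    q = quantize(weights, s, z)
    print(q, dequantize(q, s, z))
```

Storing each weight as one int8 byte instead of a four-byte float32 cuts weight memory by a factor of four, and the per-value rounding error for in-range values is bounded by half the scale. This is the kind of saving that matters on a microcontroller-class board.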